
The Biggest Blocker to Open Banking Success? Slow, Risky Data

New Pulse Q&A research shows less than 5% of European banks are fully prepared for open banking.

Kobi Korsah

Jun 16, 2021


In a recent interview with CNBC, JPMorgan Chase CEO Jamie Dimon was asked about the threat posed by the exponential growth of fintechs and tech giants in banking. His answer: “We should be scared s---less. We've just got to get quicker, better, faster.”

Consumers have come to expect fast, accessible, convenient payments on online and mobile platforms, and traditional banking is falling by the wayside in favor of open banking. Open banking is the practice of enabling secure interoperability between banks and other providers while maintaining the principles of customer centricity, security, and trust. It grants third-party providers (TPPs), often fintech startups, access to financial data from banks and other financial institutions so they can create new products and services in partnership with the incumbent banks.

In 2007, the EU adopted the original Payment Services Directive (PSD) to create a unified payment market across the European Union. Its successor, PSD2, is the directive most closely associated with open banking, and in the UK, open banking rules eventually forced the nine biggest banks to release data in a secure, standardized way so it could be shared more easily between organizations. Fast forward to 2021: Strong Customer Authentication (SCA), a PSD2 requirement detailed in the European Banking Authority's technical standards, now obliges payment service providers to make online payments more secure and prevent financial fraud. In the UK, the enforcement deadline has been extended six months, from September 14, 2021 to March 14, 2022.
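Under PSD2, SCA means authenticating the customer with at least two independent elements drawn from three categories: knowledge (something only the user knows), possession (something only the user has), and inherence (something the user is). The Python sketch below illustrates only that two-category rule. `Factor` and `satisfies_sca` are hypothetical names, and the sketch deliberately leaves out other parts of SCA, such as dynamic linking for remote payments and the permitted exemptions.

```python
from enum import Enum
from typing import Iterable


class Factor(Enum):
    """The three SCA element categories (hypothetical modelling for illustration)."""
    KNOWLEDGE = "knowledge"    # e.g. a password or PIN
    POSSESSION = "possession"  # e.g. a registered phone or card reader
    INHERENCE = "inherence"    # e.g. a fingerprint or face scan


def satisfies_sca(passed_checks: Iterable[Factor]) -> bool:
    """Return True if the checks that passed cover at least two distinct categories.

    Two checks from the same category (say, a password plus a PIN) count once,
    because they are not independent elements.
    """
    return len(set(passed_checks)) >= 2


print(satisfies_sca([Factor.KNOWLEDGE, Factor.KNOWLEDGE]))   # False
print(satisfies_sca([Factor.KNOWLEDGE, Factor.POSSESSION]))  # True
```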

While the passing of PSD2 SCA is a watershed moment and a loud wake-up call for the retail banking and financial services industries, readiness continues to confound financial institutions across the region.

Only 2% of EU Banks Meet PSD2 Requirements

Open banking is being adopted at varying paces from Canada to Australia, as the map below shows. For example, while U.S. authorities have not yet mandated open banking, they have blessed it and allow TPPs to gather information, fueling the rise of fintech beacons such as Stripe and Square. So far, it has been up to the market to decide what to do, but there are strong signs that this will change soon.

A Pulse Q&A study commissioned by Delphix found that less than 5% of European banks are fully prepared for SCA and compliant with previous PSD2 mandates. A staggering 69% of businesses have implemented less than 50% of PSD2 requirements. While 30% of senior IT executives say that a focus on payments is mission-critical, this area of rapid innovation is working out far better for the newer players. The reason?

Traditional banks and financial services organizations collect vast amounts of data, but using that data for innovation can be challenging. Data is spread across disparate systems, often siloed in different departments and not easily accessible. Core banking systems, anchored by mainframes in dire need of modernization, prevent organizations from taking innovative approaches to everything from accounts to payments to loan processing. On top of that, security concerns and strict data privacy regulations stifle the ability to move and share data.

The Swedish open banking platform Tink found that 41% of 450 EU banks failed to provide a sandbox for TPPs by the deadline. In May of last year, another study in the UK found that half of the banks offering APIs did not respond to connection requests from TPPs, and nearly 4 out of 10 banks did not accommodate TPPs' need to test APIs.

Forty-six percent of survey participants interviewed by Pulse Q&A say banks don’t allow API scenario testing with real data, and 37% of sandbox environments look nothing like live ones. Furthermore:

29% of developers and testers must rely on Ops teams to provide and refresh their data environments manually

42% rely on synthetic or subsetted data, which does not guarantee real-time delivery of current, complete, or real data

60% say data protection challenges hamper projects
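One common way to ease the data protection problem without falling back on synthetic data is to mask sensitive fields in a copy of production data before it reaches test environments. The Python sketch below shows a single masking technique, keyed hashing with HMAC-SHA256, applied to an account number. It is an illustrative assumption, not the method of any particular product, and `MASKING_KEY` and `mask_account_number` are hypothetical names. Because the same input always produces the same token, relationships between tables survive masking.

```python
import hashlib
import hmac

# Hypothetical key: in practice it would come from a secrets manager and
# never be stored alongside the masked data.
MASKING_KEY = b"replace-with-a-managed-secret"


def mask_account_number(account_number: str, key: bytes = MASKING_KEY) -> str:
    """Replace an account number with a stable, all-digit token.

    The token has the same length as the input (up to 64 digits), and the
    same input always maps to the same token, so joins across accounts,
    payments, and loans tables still line up in the masked copy.
    """
    digest = hmac.new(key, account_number.encode("utf-8"), hashlib.sha256).hexdigest()
    # Map each hex character to a decimal digit to keep a numeric format.
    digits = "".join(str(int(ch, 16) % 10) for ch in digest)
    return digits[: len(account_number)]


rows = [
    {"account": "12345678", "amount": 250.00},
    {"account": "12345678", "amount": -40.00},
]
masked = [{**row, "account": mask_account_number(row["account"])} for row in rows]
```

Keyed hashing is pseudonymization, not anonymization: under the GDPR, pseudonymized data still counts as personal data, so masked copies still need access controls. The modulo step also skews the digit distribution slightly, which is usually acceptable for test data.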

Traditional test data management tools are simply not up to the task of wrangling data from across a multi-generational technology stack. Instead, they make it hard for enterprise teams to run the integration testing needed to ensure that composite apps, microservices, and internal APIs work seamlessly together.
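The finding that many sandboxes look nothing like live systems suggests one cheap automated check a team might add to its integration tests: compare the shape of a sandbox API response with a masked sample from production and flag any drift. The Python sketch below is a hypothetical illustration; `schema_of`, `schema_drift`, and the example payloads are invented for this purpose and do not reflect any real bank's API.

```python
from typing import Any, Dict, Optional, Tuple


def schema_of(payload: Any, prefix: str = "") -> Dict[str, str]:
    """Flatten a JSON-like payload into {field path: Python type name}."""
    if isinstance(payload, dict):
        fields: Dict[str, str] = {}
        for key, value in payload.items():
            path = f"{prefix}.{key}" if prefix else key
            fields.update(schema_of(value, path))
        return fields
    if isinstance(payload, list):
        element_fields: Dict[str, str] = {}
        for item in payload:  # every element contributes to the same "[]" path
            element_fields.update(schema_of(item, f"{prefix}[]"))
        return element_fields
    return {prefix: type(payload).__name__}


def schema_drift(sandbox: Any, live: Any) -> Dict[str, Tuple[Optional[str], Optional[str]]]:
    """Return {path: (sandbox type, live type)} for every path that differs."""
    sb, lv = schema_of(sandbox), schema_of(live)
    return {p: (sb.get(p), lv.get(p)) for p in sb.keys() | lv.keys() if sb.get(p) != lv.get(p)}


sandbox_response = {"accountId": "A1", "balance": 100}
live_sample = {"accountId": "A1", "balance": {"amount": "100.00", "currency": "GBP"}}
print(schema_drift(sandbox_response, live_sample))
```

In a CI pipeline, a non-empty result could fail the build, signaling that the sandbox needs refreshing before its test results can be trusted.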

Continuous Testing Becomes the Mother of All Challenges

DevOps and CI/CD, which many of these teams still aspire to, demand continuous testing. Automated code and infrastructure shine a spotlight on the lack of access to data-ready environments. Several years ago, when waterfall was all the rage, having five pre-production environments and taking six months to refresh test data was acceptable. Then Agile increased the number of parallel releases but not the availability of pre-production environments. Suddenly, test-bed setup and refresh became time-critical, exposing flawed processes and bottlenecks.

To participate safely in open banking, banks need access to fresh, compliant data for developing and testing new APIs, as well as the current and new applications that rely on data shared between banks. Otherwise, they risk losing ground in a transformed industry. And there is still the matter of hefty fines of up to 4% of turnover for non-compliance with regulations such as the GDPR.
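For context on the GDPR figure: Article 83(5) caps the higher tier of fines at EUR 20 million or 4% of total worldwide annual turnover for the preceding financial year, whichever is higher. The short Python calculation below works through that cap. It shows the legal maximum only, not what a regulator would actually impose, and the function name is a hypothetical choice.

```python
def gdpr_max_fine_eur(worldwide_annual_turnover_eur: int) -> int:
    """Upper-tier GDPR fine cap (Art. 83(5)): the greater of EUR 20 million
    or 4% of total worldwide annual turnover, in whole euros."""
    four_percent = worldwide_annual_turnover_eur * 4 // 100
    return max(20_000_000, four_percent)


print(gdpr_max_fine_eur(2_000_000_000))  # 80000000: 4% of EUR 2bn exceeds EUR 20m
print(gdpr_max_fine_eur(100_000_000))    # 20000000: 4% is only EUR 4m, so the fixed EUR 20m applies
```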

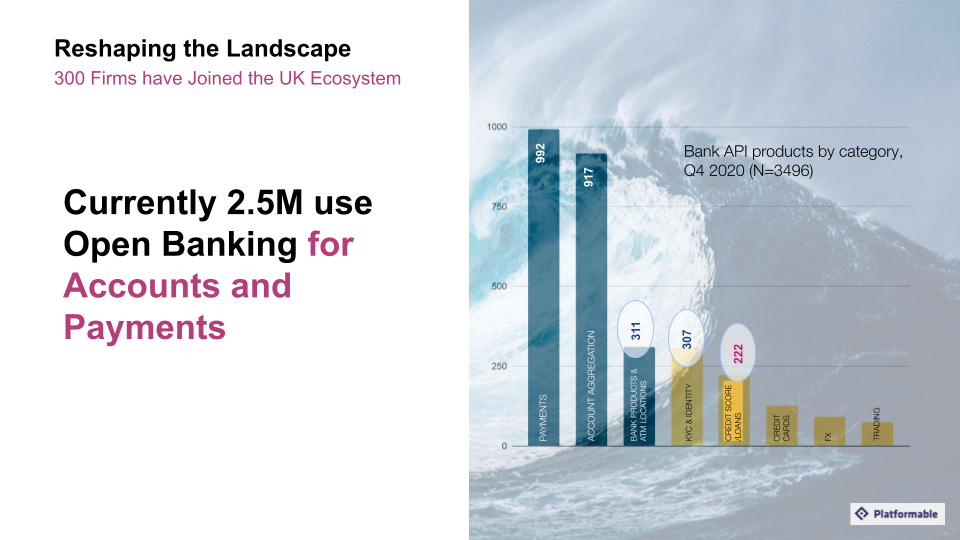

The Open Banking Implementation Entity (OBIE) reports that 2.5 million consumers and small and medium enterprises (SMEs) in the UK use open banking-enabled products to manage their finances, access credit, and make payments. As the chart below shows, some advanced use cases that have seen less traction could be transformative for banks.

For example, banks still spend astronomical sums processing and underwriting credit risk so they can be confident in their lending decisions. Loan processing teams still manually examine physical bank statements to assess a client's creditworthiness for a mortgage. Recently, my wife and I had to provide physical copies of three months' bank statements on three separate occasions, after the vendor of our first-choice property pulled out during the initial transaction and we had to switch to our second choice. The lender's rigid manual processes, which could not accommodate the switch seamlessly, made that bad experience worse. In situations like that, more sophisticated use of bank data during customer onboarding or the application stage could provide real insights for faster decisions and a better experience. It could also reduce the risk of fraud.
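To make the mortgage example concrete: instead of photocopied statements, a lender could, with the applicant's consent, pull three months of transactions through an account-information API and summarize them automatically. The Python sketch below computes one such summary, average monthly inflow. The `Transaction` record and `average_monthly_inflow` function are hypothetical and do not follow any specific open banking API schema, and a real affordability or fraud check would use far more signals.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date
from typing import Dict, List, Set, Tuple


@dataclass
class Transaction:
    booked: date
    amount_minor: int  # pence or cents; positive = money in, negative = money out


def average_monthly_inflow(transactions: List[Transaction]) -> float:
    """Average total inflow per calendar month present in the data, in minor units."""
    inflow: Dict[Tuple[int, int], int] = defaultdict(int)
    months: Set[Tuple[int, int]] = set()
    for t in transactions:
        month = (t.booked.year, t.booked.month)
        months.add(month)
        if t.amount_minor > 0:  # outflows such as rent are ignored
            inflow[month] += t.amount_minor
    return sum(inflow.values()) / len(months) if months else 0.0


history = [
    Transaction(date(2021, 3, 1), 300_000),   # salary
    Transaction(date(2021, 3, 2), -120_000),  # rent
    Transaction(date(2021, 4, 1), 300_000),
    Transaction(date(2021, 5, 1), 300_000),
]
print(average_monthly_inflow(history))  # 300000.0, i.e. 3,000.00 per month
```

Counting only the months that appear in the data would overstate inflow if a month had no transactions at all; a production version would more likely take the statement period as an explicit input.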

Keeping Up With the $5.5 Trillion Fintech Market Requires Access to Fresh, Compliant Data

Open banking is part of the API groundswell that Gartner calls “the API economy,” describing it as “an enabler for turning a business into a platform.” Ultimately, open APIs allow businesses to monetize data, forge profitable partnerships, and open new paths for innovation and growth. McKinsey’s characterisation of APIs as “connective tissue linking ecosystems of technologies and organizations” underscores how important high-quality continuous testing and QA are at the heart of the API lifecycle. And APIs require a consistent, unified, and automated approach to data.

In the next installment of this blog series, we’ll explore how deploying programmable data can:

Accelerate integration, unit, validation, and performance testing

Reduce time to market with open APIs through rapid, assured, automated delivery/refresh of privacy-compliant data-ready environments across pre-prod

Comply with GDPR, CCPA, and PCI without limiting access, and avoid sanctions

Watch “How to Surf the Open Banking Tsunami” to learn more about enabling faster delivery of robust, innovative products and services and keeping up with the evolution of fintech.
